Apple has consistently pushed the boundaries of technological innovation, and its recent foray into artificial intelligence (AI) is no exception. With advancements like Siri and increasingly sophisticated machine learning algorithms, the company is exploring the potential of AI to enhance user experiences across its ecosystem. Nevertheless, questions remain about the true extent of Apple's AI reasoning capabilities. Can these systems truly understand and analyze complex information, or are they simply executing pre-programmed tasks? This article delves into the intricacies of Apple's AI technology, examining its strengths and limitations in the realm of reasoning.

One key area of focus is the ability of Apple's AI to produce coherent and logical responses to user queries. While Siri has made significant strides in understanding natural language, its ability to engage in nuanced conversations and solve complex problems remains limited. Furthermore, it is unclear whether Apple's AI models possess the capacity for true comprehension, or whether they are merely mimicking human-like behavior through pattern recognition and statistical analysis.

- Moreover, the issue of bias in AI algorithms remains a significant concern. As with any technology trained on vast datasets, Apple's AI systems could potentially perpetuate existing societal biases, leading to unfair or discriminatory outcomes.

- Addressing these ethical challenges will be crucial for Apple as it continues to develop and deploy AI technologies.

Unveiling the Limitations of Artificial Intelligence: An Apple Perspective

While Apple has made remarkable strides in artificial intelligence, it is crucial to acknowledge the inherent limitations of this technology. Despite AI's profound capabilities in areas like data analysis, there are fundamental aspects where human intelligence remains indispensable. Notably, AI algorithms can struggle with nuanced reasoning, innovation, and ethical considerations.

- Additionally, deep learning can be susceptible to biases inherent in the data it is trained on, leading to inaccurate outcomes.

- Therefore, Apple must strive for accountability in AI design and actively work to resolve these limitations.

Finally, a balanced approach that leverages the strengths of both AI and human judgment is essential for achieving responsible outcomes in this domain.

Apple's AI Study: A Deep Dive into Reasoning Constraints

A recent investigation by Apple delves into the intricacies of reasoning constraints within artificial intelligence systems. The research sheds light on how these constraints, often unstated, can shape the performance of AI models in sophisticated reasoning tasks.

Apple's analysis highlights the importance of explicitly defining reasoning constraints and incorporating them into AI development. By doing so, researchers can mitigate potential inaccuracies and enhance the robustness of AI systems.

The study proposes a novel framework for structuring reasoning constraints that are simultaneously powerful and transparent. This framework seeks to facilitate the development of AI systems that can reason more coherently, leading to more reliable outcomes in real-world applications.

Reasoning Gaps in Apple's AI Systems: Challenges and Opportunities

Apple's foray into the realm of artificial intelligence (AI) has been marked by notable successes, showcasing its prowess in areas such as natural language processing and computer vision. However, like all cutting-edge AI systems, Apple's offerings are not without their limitations. A key challenge lies in addressing the inherent shortcomings in their reasoning capabilities. While these systems excel at executing specific tasks, they often falter when confronted with complex, open-ended problems that require sophisticated thought processes.

This limitation stems from the nature of current AI architectures, which primarily rely on probabilistic models. These models are highly effective at identifying patterns and making predictions based on vast datasets. However, they often lack the capacity to interpret the underlying meaning behind information, which is crucial for sound reasoning.

Overcoming these reasoning shortcomings presents a formidable task. It requires not only advances in AI algorithms but also innovative approaches to structuring knowledge.

One promising path is the integration of symbolic reasoning, which employs explicit rules and logical processes. Another avenue involves incorporating common-sense knowledge into AI systems, enabling them to reason more like humans.
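To make the idea of explicit rules concrete, here is a minimal sketch of forward chaining, one classic symbolic reasoning technique. It is an illustration only and not drawn from any Apple system; the rule set, the fact names, and the `forward_chain` helper are all hypothetical.

```python
from typing import FrozenSet, List, Set, Tuple

# A rule is (premises, conclusion): once every premise is a known fact,
# the conclusion becomes a known fact too.
Rule = Tuple[FrozenSet[str], str]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Apply the rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical toy knowledge base, invented for this example.
RULES: List[Rule] = [
    (frozenset({"is_bird"}), "has_wings"),
    (frozenset({"has_wings", "not_penguin"}), "can_fly"),
]

derived = forward_chain({"is_bird", "not_penguin"}, RULES)
print(sorted(derived))  # ['can_fly', 'has_wings', 'is_bird', 'not_penguin']
```

Unlike a statistical model, every derived fact here can be traced back to the rule that produced it, which is the transparency that makes symbolic methods attractive as a complement to pattern-based systems.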

Addressing these reasoning shortcomings holds immense opportunity. It could enable AI systems to tackle a wider range of challenging problems, from scientific discovery to personalized learning. As Apple continues its journey in the realm of AI, closing these reasoning gaps will be paramount to fulfilling the true potential of this transformative technology.

Examining the Limits of AI Logic: Findings from an Apple Research Initiative

An innovative research initiative spearheaded by Apple has yielded intriguing insights into the capabilities and constraints of artificial intelligence logic. Through a series of comprehensive experiments, researchers probed AI reasoning, revealing both its strengths and potential shortcomings. The study, conducted at Apple's research labs, examined the performance of various AI algorithms across a broad range of problems. Its key conclusions are that while AI has made significant progress in areas such as pattern recognition and data analysis, it still struggles with tasks requiring abstract reasoning and common-sense understanding.

- Additionally, the study sheds light on the effect of training data on AI logic, underscoring the need for inclusive datasets to mitigate bias.

- As a result, the findings have significant consequences for the future development and deployment of AI systems, calling for a more nuanced approach to addressing the obstacles inherent in AI logic.
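One simple way to see how skewed training data can show up in a model's behavior is to compare accuracy across groups. The sketch below is a generic illustration, not a method taken from the study; the group labels, the toy records, and the helper names `accuracy_by_group` and `accuracy_gap` are assumptions made for the example.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Each record is (group, true_label, predicted_label).
Record = Tuple[str, int, int]


def accuracy_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Return the fraction of correct predictions for each group."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group: Dict[str, float]) -> float:
    """Difference between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


# Invented toy data: group "a" is well represented, group "b" is not.
RECORDS = [
    ("a", 1, 1), ("a", 0, 0), ("a", 1, 1), ("a", 0, 0),
    ("b", 1, 0), ("b", 0, 0),
]

per_group = accuracy_by_group(RECORDS)
print(per_group)                # {'a': 1.0, 'b': 0.5}
print(accuracy_gap(per_group))  # 0.5
```

A large gap does not by itself prove the data is at fault, but it is a cheap signal that a group may be underrepresented or poorly modeled and deserves a closer look.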

Apple's Exploration: Illuminating the Terrain of Cognitive Biases in Machine Learning

In a groundbreaking endeavor, Apple has released a comprehensive study focused on the pervasive issue of cognitive biases in machine learning. This ambitious initiative aims to pinpoint the root causes of these biases and formulate strategies to address their detrimental impact. The study's findings could reshape the field of AI by promoting fairer, more trustworthy machine learning algorithms.

Apple’s researchers are leveraging a range of advanced techniques to investigate vast datasets and detect patterns that demonstrate the presence of cognitive biases. The study's meticulous approach encompasses a wide spectrum of areas, from image recognition to risk assessment.

- By shedding light on these biases, Apple's study aims to redefine the landscape of AI development.

- Furthermore, the study's findings may offer practical guidance for developers, policymakers, and scientists working to create more responsible AI systems.

Charlie Korsmo Then & Now!

Charlie Korsmo Then & Now! Katie Holmes Then & Now!

Katie Holmes Then & Now! Keshia Knight Pulliam Then & Now!

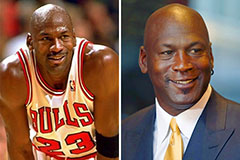

Keshia Knight Pulliam Then & Now! Michael Jordan Then & Now!

Michael Jordan Then & Now! Catherine Bach Then & Now!

Catherine Bach Then & Now!